workshops

Workshops I organised and co-organised.

I am very active in organising workshops at robotics and computer vision conferences.

Behaviour Priors in Reinforcement Learning for Robotics (ICRA 2022) The goal of this workshop was to discuss the role that behaviour priors could play in Reinforcement Learning for robotics. This includes the various ways in which we can learn/model these priors, methods to integrate their experience within the RL framework and their applicability to solving some of the key challenges faced by RL for real-world robotics.

Embodied AI Workshop (CVPR 2022) Similar to the 2021 edition of the workshop, the goal of this workshop was to share and discuss the current state of intelligent agents that can see, talk, listen, act, and reason. The Embodied AI 2021 workshop was held virtually in conjunction with CVPR 2021. It featured a host of invited talks covering a variety of topics in Embodied AI, many exciting challenges, a poster session, and panel discussions. We contributed the Robotic Vision Scene Understanding Challenge.

Embodied AI Workshop (CVPR 2021) The goal of this workshop was to share and discuss the current state of intelligent agents that can see, talk, listen, act, and reason. The Embodied AI 2021 workshop was held virtually in conjunction with CVPR 2021. It featured a host of invited talks covering a variety of topics in Embodied AI, many exciting challenges, a poster session, and panel discussions. We contributed the Robotic Vision Scene Understanding Challenge.

Good Citizens of Robotics Research (RSS 2020) This workshop provided a forum for discussions and commentary on topics about research, dissemination, and community, by taking inspiration from Computer Vision research and this excellent CVPR workshop. We discussed important questions like: How to incentivize and do good systems research? How to best disseminate results, code, and hardware? How to be a fair reviewer? How to be a good area chair? How to support a caring and inclusive community? How to bridge the gap between academia and industry and the role of ethics in their interplay? and in general how to go about being a good citizen of robotics research.

Beyond mAP: Reassessing the Evaluation of Object Detectors (ECCV 2020) This workshop assessed current evaluation procedures for object detection, highlighted their shortcomings, and opened discussion of possible improvements.

Reliable Deployment of Machine Learning for Long-Term Autonomy (IROS 2020) This workshop focused on the problem of long-term autonomy for mobile robots and the challenge of building reliable machine learning components in the robotic system that can handle bad sensory data, shifts to abnormal operational conditions, and erroneous classifications and detections.

The Importance of Uncertainty in Deep Learning for Robotics (IROS 2019)

In this workshop we discussed the importance of uncertainty in deep learning for robotic applications. The workshop provided tutorial-style talks that covered the state of the art of uncertainty quantification in deep learning, specifically Bayesian and non-Bayesian approaches, spanning perception, world-modeling, decision making, and actions. Invited expert speakers discussed the importance of uncertainty in deep learning for robotic perception, but also action.

Robotic Vision Probabilistic Object Detection Challenge (CVPR 2019)

This workshop brought together the participants of the first Robotic Vision Challenge on Probabilistic Object Detection, a new competition targeting both the computer vision and robotics communities. The workshop focussed on outcomes of the competition in the morning session, featuring talks by the four best scoring teams. After that we welcomed our invited speakers in the afternoon. Organised with Feras Dayoub and other colleagues from the Australian Centre for Robotic Vision, and Anelia Angelova from Google AI.

Deep Learning for Semantic Visual Navigation (CVPR 2019)

We discussed ideas to advance visual navigation by combining recent developments in deep and reinforcement learning. A special focus was on approaches that incorporate more semantic information into navigation, and combine visual input with other modalities such as language. Organised with colleagues from Google AI (Alexander Toshev, Anelia Angelova) and Imperial College London (Ronald Clark, Andrew Davison).

New Benchmarks, Metrics, and Competitions for Robotic Learning (RSS 2018)

Similar in scope and topic to the CVPR workshop, here we discussed new benchmarks, competitions, and performance metrics that address the specific challenges arising when deploying (deep) learning in robotics.

Real-World Challenges and New Benchmarks for Deep Learning in Robotic Vision (CVPR 2018)

In this workshop we discussed crucial challenges arising when deploying deep learning methods in real-world robotic applications, and a set of future large scale robotic vision benchmarks to address the critical challenges for robotic perception that are not yet covered by existing computer vision and robotics benchmarks, such as performance in open-set conditions, incremental learning with low-shot techniques, Bayesian optimisation, active learning, and active vision.

Long-term autonomy and deployment of intelligent robots in the real-world (ICRA 2018)

This workshop (led by Feras Dayoub) discussed the challenges of autonomous robots that have to reliably operate for long periods of time while having to demonstrate a high level of robustness and fault tolerance.

Learning for Localization and Mapping (IROS 2017)

The goal of this workshop was to present and discuss developments in learning-based approaches for localization and mapping systems.

Invited speakers: Wolfram Burgard (University of Freiburg), Jana Kosecka (George Mason University), Stefan Leutenegger (Imperial College London), Simon Lynen (Google), Marc Pollefeys (Microsoft).

Organised with Cesar Cadena and Igor Gilitschenski (both ETH Zurich), John Leonard and Sudeep Pillai (both MIT), and Fabio Ramos (University of Sydney).

New Frontiers for Deep Learning in Robotics (RSS 2017)

A wide range of renowned experts discussed deep learning techniques at the frontier of research that are not yet widely adopted, discussed, or well-known in our community. We carefully selected research topics such as Bayesian deep learning, generative models, or deep reinforcement learning for planning and navigation that are of high relevance and potentially groundbreaking for robotic perception, learning, and control. The workshop introduced these techniques to the robotics audience, but also exposed participants from the machine learning community to real-world problems encountered by robotics researchers who apply deep learning in their research.

Invited speakers: Yann LeCun (Facebook, NYU), Yarin Gal (University of Cambridge), Josh Tenenbaum (MIT), David Cox (Harvard), Chelsea Finn (UC Berkeley), Piotr Mirowski (DeepMind), Aaron Courville (Université de Montréal).

Organised with the support of Jürgen Leitner, Michael Milford, Peter Corke (QUT, Brisbane), and Pieter Abbeel (UC Berkeley).

Deep Learning for Robotic Vision (CVPR 2017)

Recent advances in deep learning techniques have made impressive progress in many areas of computer vision, including classification, detection, and segmentation. While all of these areas are relevant to robotics applications, robotics also presents many unique challenges which require new approaches.

Robotic vision specific challenges include the need for real-time analysis, the need for accurate 3d understanding of scenes, and the difficulty of doing experiments at scale. There are also opportunities robotics brings to computer vision, for example the ability to control position and viewing direction of the camera, and to provide a data source for “grounded” learning of concepts, reducing the need for manual labeling.

Invited speakers: Jitendra Malik (UC Berkeley), Raquel Urtasun (U Toronto / Uber ATG), Dieter Fox (U Washington), Honglak Lee (Google Brain / U Michigan), Abhinav Gupta (CMU), Jianxiong Xiao (AutoX), Richard Newcombe (Facebook), Raia Hadsell (Google DeepMind), Ashutosh Saxena (Brain of Things).

Organised with support from Jürgen Leitner, Michael Milford, Ben Upcroft, Peter Corke (QUT, Brisbane), Pieter Abbeel (UC Berkeley), Wolfram Burgard (Uni Freiburg).

Are the Sceptics Right? - Limits and Potentials of Deep Learning in Robotics (RSS 2016)

We analysed why deep learning has not yet had the huge impact in robotics it had in neighboring research disciplines, especially in computer vision. The workshop identified the limits and potentials of current deep learning techniques in robotics, and proposed directions for future research to overcome those limits and realize the promising potentials.

Invited speakers: John Leonard (MIT), Larry Jackel (North C Technologies), Dieter Fox (University of Washington), Oliver Brock (TU Berlin), Pieter Abbeel (UC Berkeley), Walter Scheirer (University of Notre Dame), Raia Hadsell (Google DeepMind), Ashutosh Saxena (Cornell and Stanford University).

Co-organisers were Jürgen Leitner, Michael Milford, Ben Upcroft, Peter Corke (QUT, Brisbane), Pieter Abbeel (UC Berkeley), Wolfram Burgard (Uni Freiburg).

Visual Place Recognition: What is it good for? (RSS 2016)

This half-day workshop, co-organised with Ben Upcroft, Michael Milford (QUT, Brisbane), and Peer Neubert (TU Chemnitz), focussed on concepts and ideas for robust vision-based place recognition in severely changing environments, and discussed the extent to which place recognition is useful, or even required, for robots.

Visual Place Recognition in Changing Environments (ICRA 2015)

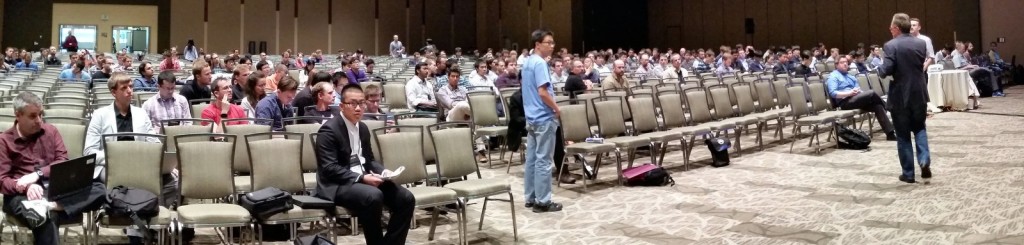

This half-day workshop at ICRA 2015 in Seattle built on the highly successful 2014 workshop of the same name at ICRA, and discussed novel concepts and ideas for robust vision-based place recognition in severely changing environments. Organised with Peter Corke and Michael Milford.

Around 130 people followed the invited talks and paper presentations in a large ballroom.

Visual Place Recognition in Changing Environments (CVPR 2015)

This workshop continued the discussion from the previous year at ICRA and addressed the computer vision community at CVPR. Organised with Peter Corke and Michael Milford (QUT, Brisbane), and Torsten Sattler (ETH Zürich).

Approximately 40 people came by for talks and poster presentations. It was great to interact with the authors and of course the invited speakers Josef Sivic and John Leonard at CVPR as well as David Cox and Chi Hay Tong at ICRA. Thanks everybody for contributing!

Visual Place Recognition in Changing Environments (ICRA 2014)

Organised with Peter Corke and Michael Milford (QUT, Brisbane). We discussed novel concepts and ideas for robust vision-based place recognition in severely changing environments. Such changes – induced e.g. by the time of day, weather or seasonal effects as well as human activity – are a ubiquitous challenge for all autonomous systems aiming at long-term operations in both indoor and outdoor settings.

We had 9 contributed papers, a tutorial given by Peter, and invited talks by Michael and Paul Newman.

Robust and Multimodal Inference in Factor Graphs (ICRA 2013)

This full-day workshop brought together researchers working on novel approaches for modelling and inference in factor graphs. The goal of the workshop was to discuss techniques that increase robustness and allow incorporating multi-modal Gaussian or non-Gaussian measurements. New concepts for inferring multi-modal posteriors were also in the scope of the workshop, as well as novel applications beyond the ubiquitous pose graph SLAM. I organised this workshop with John Leonard (MIT CSAIL) and Edwin Olson (University of Michigan).